Making computing always available.

I am an Associate Professor in Computer Science at the University of Bath, where I lead the Advanced Interaction and Sensing (AIS) Lab. I also hold an honorary appointment with NHS Bristol Trust and am a Fellow of the Association of British Science Writers.

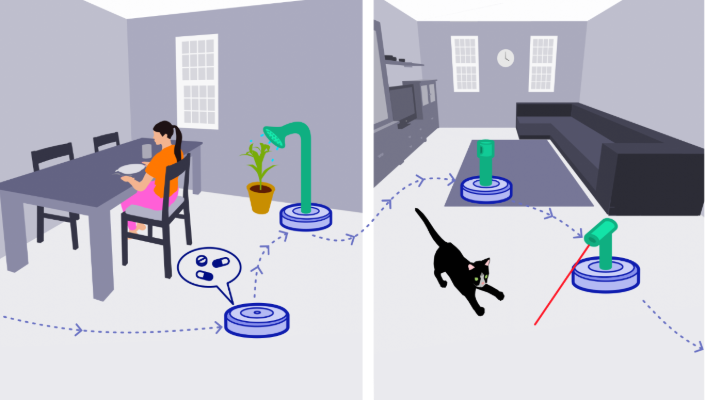

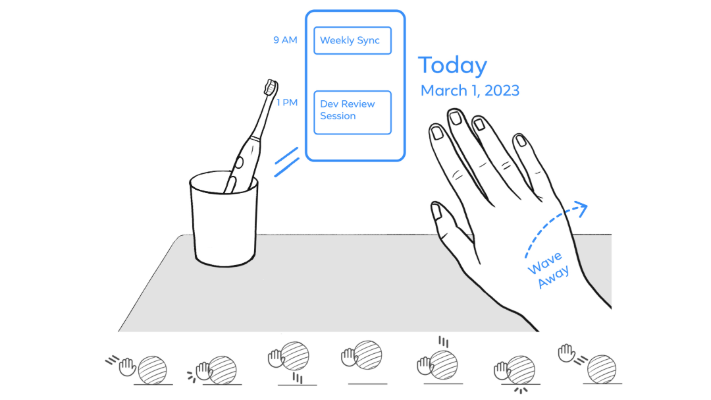

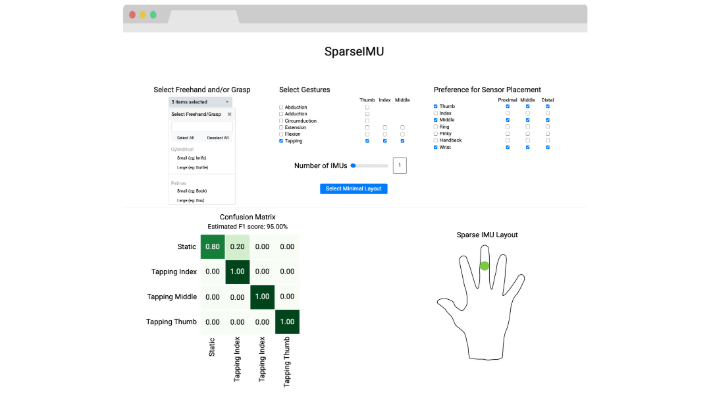

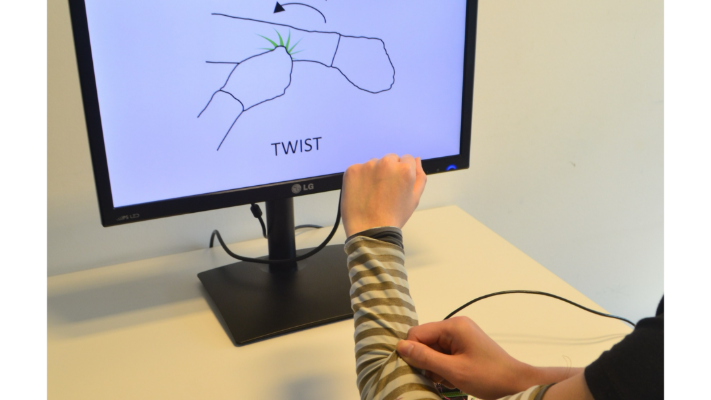

My research bridges Machine Learning and Human–Computer Interaction to enable always-available computing. At the AIS Lab, we develop practical solutions in three areas: interaction design, sensing, and AI. Our work creates new ways for people to engage with computing systems, especially when their hands are busy or their attention is focused elsewhere.

Our research is published in leading venues, including ACM CHI, UIST, DIS, and TOCHI, and has been featured by major media outlets such as the BBC, NPR, and The Independent.

Background

I received my Ph.D. from the Human–Computer Interaction group at the Max Planck Institute and Saarland University in Germany. My experience spans both industrial and academic labs, which has enabled me to translate novel research into scalable technologies with real-world impact in healthcare and consumer applications.

Outside the lab, I collect fountain pens, enjoy clever puns, and love strolling through new cities. I welcome inquiries about student opportunities and research partnerships, and I am always happy to chat about my experiences living in seven countries. Please feel free to contact me.